Ananya Srinivasan

Computer Science @ University of Maryland

Hi, I'm Ananya! I grew up in Tokyo, Japan, and am now studying Computer Science at the University of Maryland. I'm deeply passionate about XR and am an avid hackathon-goer (I've won 4 hackathons!). I also started a new intercollegiate organization called PIXL this year to bring more women and underrepresented groups into AR/VR! You can see some of my experiences and projects below.

Experience & Internships

NASA Glenn Research Center

SWE/XR Development Intern (2x Intern) | Ohio, USA | Jan 2024 - Aug 2024

Designed an end-to-end mixed-reality flight simulator in Unity for the EPFD project, featuring an AI copilot and flight-yoke integration, and showcased it to 700K+ attendees at EAA Oshkosh (including NASA Administrator Bill Nelson). Developed multiplayer AR experiences on HoloLens 2.

STEP Development Program Intern | Tokyo, Japan | May 2024 - June 2024

Built 6+ Java projects focused on optimizing algorithms and data structures. Received mentorship in systems programming and memory management.

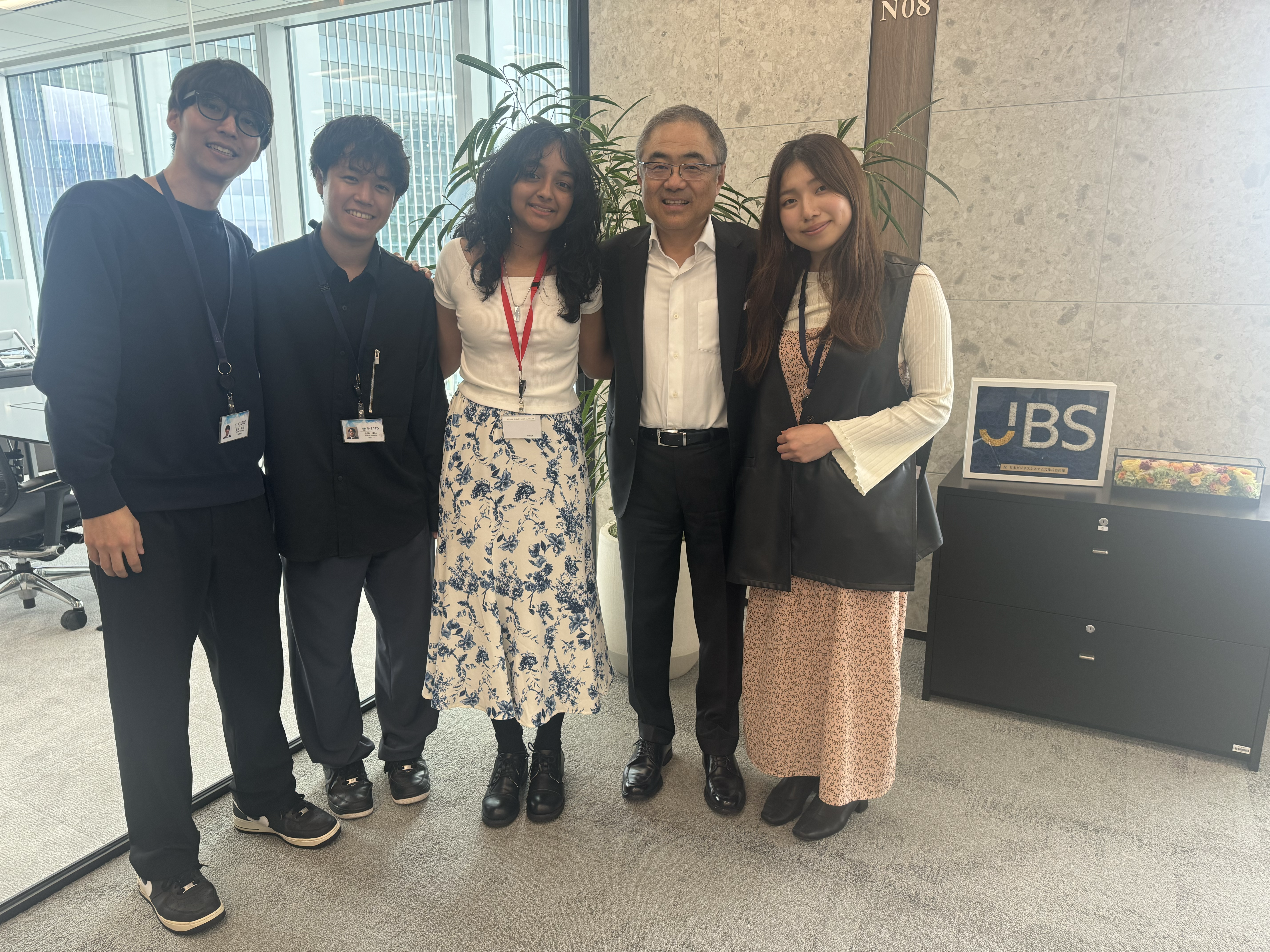

Japan Business Systems

AI and Data Science Intern | Tokyo, Japan | July 2024 - Aug 2024

Built an AWS-integrated chatbot that connects ChatGPT to 10,000+ internal documents through a retrieval-augmented generation (RAG) pipeline.

University of Maryland & NASA

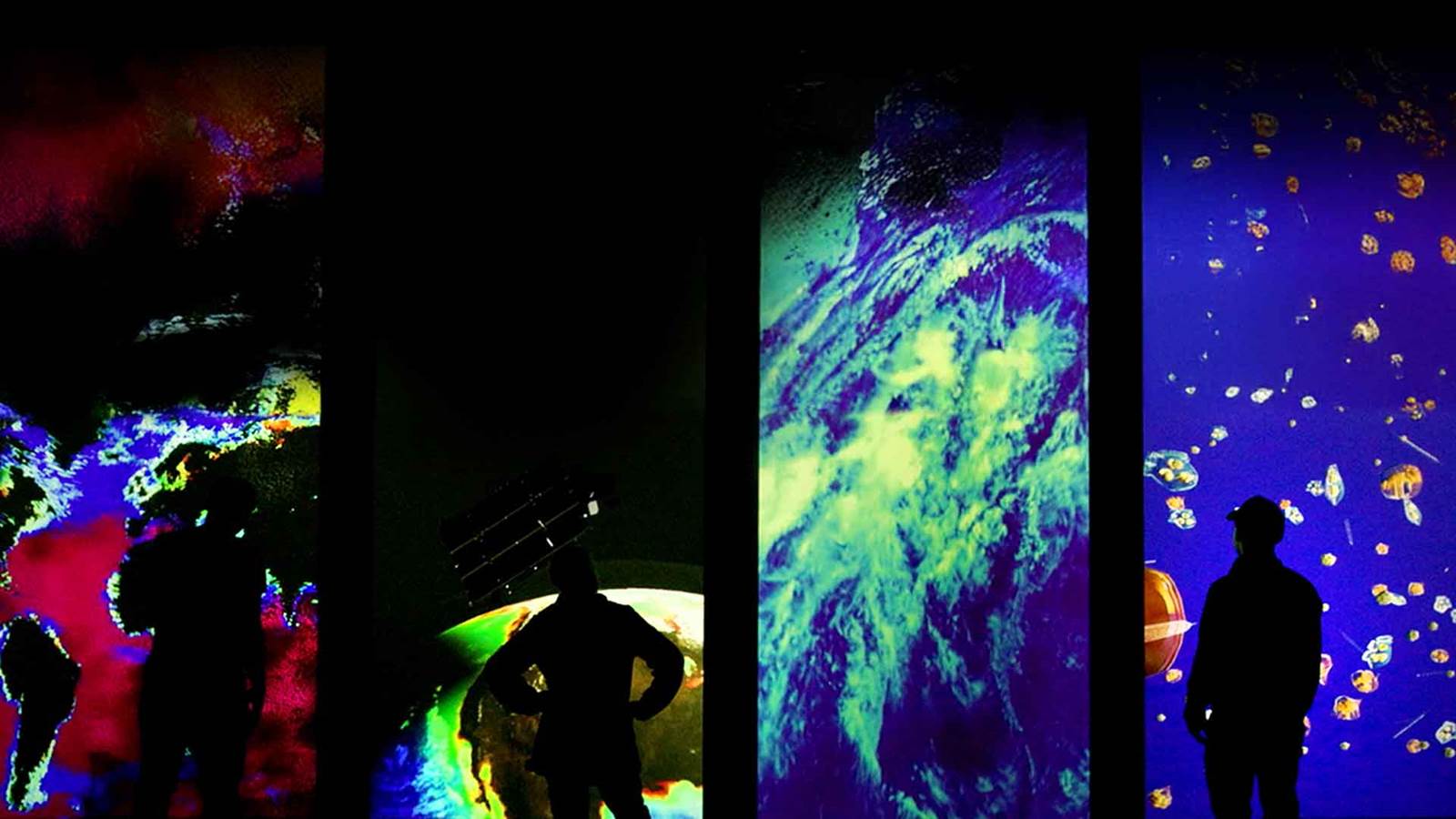

Motion Capture Systems & Data Science Researcher | Maryland, USA | Present

Developing markerless motion-capture systems in Unity/Captury. Built a Python ETL pipeline and real-time 3D visualization system for 23 years of NASA PACE data, showcased live at the Kennedy Center.

Kennedy Center Exhibit

Featured Projects

Rain With Me

Jan 2026 | Awards: MIT Reality Hack 2026, XR Health and Wellness Design and Research Track (3rd Place).

A biosensing empathy tool and XR experience that translates internal emotional states into shared physical realities through haptic feedback and AR weather visualization. Engineered a low-latency UDP architecture that fuses physiological data (galvanic skin response and heart rate) with multimodal sentiment analysis from the Gemini Live API.

Devpost

SnapFinder

Event: 3EALITY x Snap Hackathon (Eindhoven, Netherlands)

An AR application for Snap Spectacles designed to locate small misplaced gear. It offers hands-free, glanceable guidance, including an on-lens directional arrow, hot/cold visual cues, and live distance tracking in centimeters. Built with Lens Studio and TypeScript, using quaternion alignment to keep the UI stable, plus an optional Python API for registering reference objects.

Devpost

NeurAgility

2025 | Awards: Immerse the Bay 2025, OpenBCI Neurotechnology Track (3rd Place) & Virtuous Reality Winner.

A multimodal exercise-coach VR game that combines smartphone-based body tracking with EEG/EMG performance insights, using foundation models to monitor attention, muscle activation, and engagement levels. Developed at the Immerse the Bay hackathon at Stanford.

Devpost

Kääntää

2025 | Awards: ImmerseGT 2025, Lifestyle Track Winner & Snap AR Track 3rd Place.

An AR language-learning application for Snap Spectacles. Users scan real-world objects to view definitions, pronunciations, and memory-recall prompts, powered by voice input and Gemini.

Devpost

BookwXRms

2025 | Event: MIT Reality Hack 2025.

A native Apple Vision Pro mixed-reality reading companion that brings physical books to life with dynamic 3D environments, curated soundscapes, and gamified interactive elements using image tracking and spatial occlusion.

Devpost

Linear Algebra Visualization

An interactive 3D educational tool, inspired by 3Blue1Brown, that visualizes linear transformations, eigenvectors, and matrix multiplication in real time.

GitHub

Leadership & Community

PIXL (People, Interaction, and eXperience Lab)

Founder & President

Created an intercollegiate organization dedicated to bringing women, beginners, and underrepresented groups into AR/VR, HCI, and Game Development.

Visit PIXL